Customer Communication Checklist and Understanding User Flows - Part 7

- Harshal Patil

- Jan 18, 2022

In the previous article, we looked at possible solutions that could help us communicate with our customers about new features at a more personalized level. Since it would be impossible to talk to each customer, sending email blasts with the right information was our best way out.

In this article, we will look at other strategies that the team and I explored to improve the adoption of new features early in the launch cycle. If you’ve missed the earlier parts, here is the introductory article.

I originally published this in my newsletter on Substack. You can subscribe there to get new articles straight to your inbox.

Thumbnail credits to Background vector created by freepik and UX Collective.

Pilot Customer List for Next Release

We built a pilot (or beta) list of customers who would “beta test” upcoming releases before each feature launched publicly.

The next step was to go through support queries from customers and reach out to those whose issues a feature launch would solve. Requests from sales reps (a.k.a. account managers) about a particular feature also came in handy at this point. So, we needed to go through these requests and get back to the reps and their customers.

These customers needed to be enrolled in the pilot (or beta) phase of a new feature so that the feature could be tested and feedback, including the unknown unknowns, could surface before the public launch. For example, in the previous article we discussed how the intended users of a feature do not log in to our web portal and so cannot use the feature.

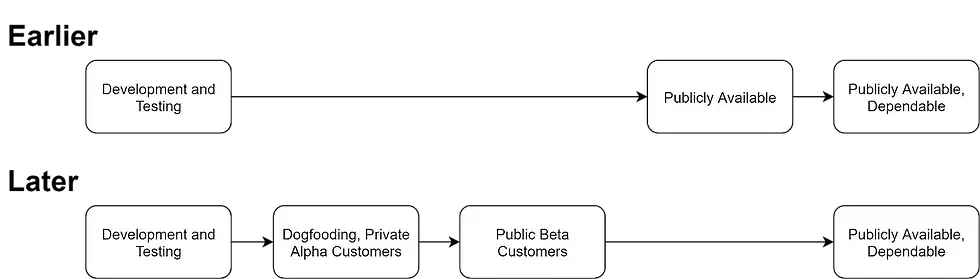

I realize this is a common launch step for features across companies. However, my organization used to release features publicly right away as an MVP (Minimum Viable Product). Launching a feature privately to only a select few customers first was an organizational change.

We used this approach for another feature launch. We released the feature privately to a select few customers and reached out to a few more to ask them to try it out. This showed the organization the benefits of the approach and helped encourage other PMs to try it.

Problem: The workstream of identifying customers to reach out to and then contacting them, whether to broadcast FAQs, announce a new feature, or explain a new regulatory requirement, is still a lot of effort and leaves room for mistakes. How do we create a playbook for it?

Built a Customer Communication Checklist

For future feature launches, we built a checklist for communicating with customers. We’ll cover this in detail in a future article. Here is a summary of it:

Identify customers. Uncover customers impacted based on product metrics, support queries, and sales insights.

Communicate in-situ. Add information about a launch to different parts of the product.

Enable go-to-market (GTM). Provide information to account managers (a.k.a. sales reps) to share with their customers or answer questions.

Enable Support. Provide information, enable shortcuts, and deliberate on escalation policies so that customer support can handle customer queries.

Announce launch. Announce the product launch in a regular launch updates email to customers, annual conferences, webinars, etc.
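The first step of the checklist, identifying customers, can be sketched in a few lines of Python. This is only an illustration under assumptions: the function name `identify_customers`, the three input lists, and the customer IDs are all hypothetical, and the real inputs would come from whatever analytics, support, and sales tools you use.

```python
from collections import defaultdict


def identify_customers(metric_flags, support_queries, sales_requests):
    """Merge customer IDs from three sources, recording why each was picked.

    All three arguments are hypothetical lists of customer IDs exported
    from product metrics, the support queue, and sales requests.
    """
    reasons = defaultdict(set)
    for customer_id in metric_flags:
        reasons[customer_id].add("product_metrics")
    for customer_id in support_queries:
        reasons[customer_id].add("support_query")
    for customer_id in sales_requests:
        reasons[customer_id].add("sales_request")
    # Customers flagged by more sources come first; ties are sorted by ID.
    return sorted(reasons.items(), key=lambda item: (-len(item[1]), item[0]))


# Made-up example data, not real customers.
candidates = identify_customers(
    metric_flags=["acme", "globex"],
    support_queries=["globex", "initech"],
    sales_requests=["globex", "acme"],
)
for customer_id, why in candidates:
    print(customer_id, sorted(why))
```

Ranking by the number of sources is just one possible choice; it puts the customers that several teams independently flagged at the top of the outreach list.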

Soon, I came across a breaking change (a change that would’ve disrupted customers’ usage of our product unless they acted on it) being introduced in a product. Along with a few cross-functional stakeholders, I had the opportunity to use this checklist to communicate the change. It helped streamline the process and provided a playbook for future launches. I found the detailed checklist approach very beneficial and reinforced my learning by reading the book The Checklist Manifesto.

Problem: To improve the success of such playbooks, we need to know which steps are more important than the rest. For that, we need to understand how effective each step is at informing customers. How do we understand that?

Understand Direction of Flow of Users

Image from UX Collective, although it is of a B2C app.

Some of the most expensive steps in the checklist are modifying the product web portal, documents, and/or transactional emails to inform customers about the changes. These steps are expensive because:

They take engineering time, and

The changes need to be reverted after some period of time.

This expense can likely be reduced by building or using software that makes it easy to add feature launch notifications in the product in multiple formats. Back when I came across this problem, the company did not have a company-wide solution for this. So, I instead wanted to see which product metrics could help us understand the direction of user flow so that we could use our limited resources judiciously. What do customers see? When they see something, what do they do?

I used product analytics software to see this. Examples of software you could use for product analytics are UXCam, Mixpanel, Google Analytics, Heap, Adobe Analytics, Amplitude, Pendo, and Gainsight PX. The software enabled me to see:

How many users go to a page in the web portal (e.g. the new feature page)?

What is the distribution of sources for users to come to that page?

What links do users click when on other pages to come to this page?

How often do users click on the how-to article links on the pages?

How often do users come from the how-to article to the page in the web portal?

How is the usage of the feature spread across geography?
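To make the question about the distribution of sources concrete, here is a minimal Python sketch of how it could be computed from a raw page-view export. The event shape (`page`, `previous_page`), the page paths, and the function name `source_distribution` are assumptions for illustration; each analytics product exposes this data in its own format, and most will answer the question in their UI without any code.

```python
from collections import Counter


def source_distribution(events, target_page):
    """Return the share of visits to target_page, keyed by the previous page.

    `events` is a hypothetical list of page-view records; a missing
    previous page is counted as a "direct" visit.
    """
    sources = Counter(
        event["previous_page"] or "direct"
        for event in events
        if event["page"] == target_page
    )
    total = sum(sources.values())
    if total == 0:
        return {}
    return {source: count / total for source, count in sources.items()}


# Made-up events for illustration only.
events = [
    {"user": "u1", "page": "/settings/self-service", "previous_page": "/settings"},
    {"user": "u2", "page": "/settings/self-service", "previous_page": "/settings"},
    {"user": "u3", "page": "/settings/self-service", "previous_page": "/help/how-to"},
    {"user": "u4", "page": "/settings/self-service", "previous_page": None},
    {"user": "u1", "page": "/home", "previous_page": None},
]
distribution = source_distribution(events, "/settings/self-service")
print(distribution)
```

In this made-up data, half of the visits come from the adjacent settings page and a quarter each from the how-to article and from direct visits.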

The metrics from the tool pointed out that statistically:

A few users went from the page to the how-to article

A few users went to the page from the how-to article

Most users seemed to come to the page from other pages near it in the hierarchy

This information was not sufficient because it did not help us make any decision. Even though most users came from adjacent pages, no design decision would change because of it.

Even if only a few users come from other sources, that doesn’t mean a how-to article won’t be written. So we needed to dive deeper into the clicks of the minority, because that is the only place where there is variance.

One takeaway from the deeper dive was as follows. Very few users were clicking on a large-font link on the web portal’s homepage to go to the self-service feature page. The link text mentioned only one capability, for example, “Edit Settings A”, whereas the self-service feature offered many. So, it was possible that users noticed they could change Settings A on their own but did not care about Settings A.

Cross-checking this information with support queries from customers suggested that customers were not aware that they could change Settings B, C, or D on their own via the web portal.

Another example was that when a feature was not available or users faced an error message due to input validation, they were very likely to click on a “read more” link to read the how-to article that explained the issue in more detail.

Next Up…

I’ve kept this article shorter than the previous ones, as this is an appropriate point to end the narration of my team’s experience in improving customer experience and to start a retrospective on areas of improvement.

Next, we will look at other options that could be explored to understand customer behavior while improving customers’ experience of and satisfaction with our product.

Originally published at https://harshalpatil.substack.com on Dec 28, 2021